Projects

Our primary focus is linguistically informed neural natural language processing. To that end, we are working on several funded projects, described below.

Memory

The main mechanisms currently used for memory in LLMs (model parameters, long context and RAG) all have drawbacks. In comparison, the memory of biological organisms, including humans, is much more powerful. We are interested in creating memories that support continuous updating yet are fully integrated into the main model.

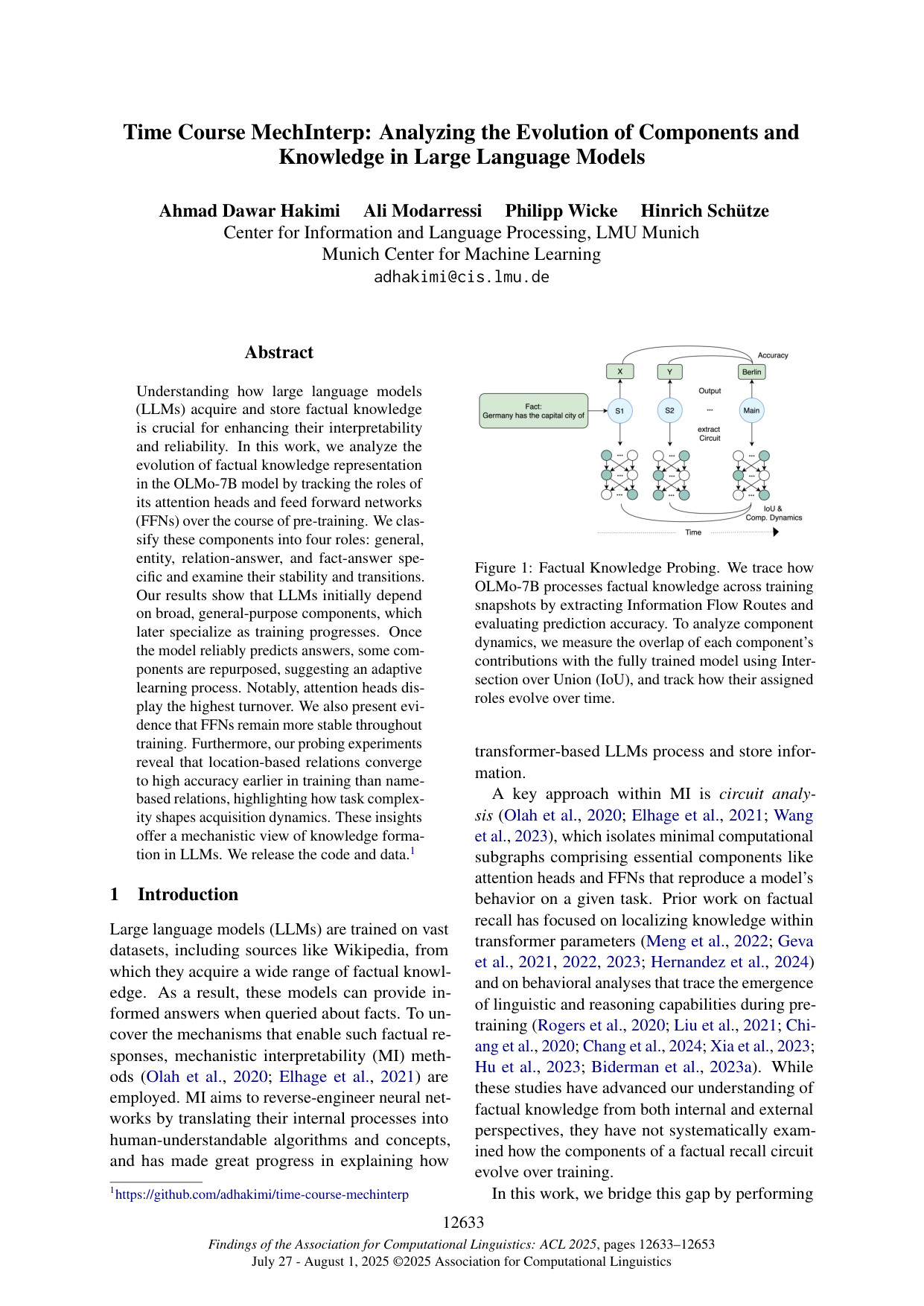

Interpretability

We currently have only a limited understanding of how LLMs work: they are still largely black boxes. Improving interpretability is important for safety, for high-stakes applications like medicine, and as a basis for high-quality deep learning research, which is currently difficult given our reliance on heuristics and trial and error. We are working on models of the internal mechanisms of LLMs for phenomena like bias and factual knowledge, with a particular focus on how these emerge during training.

Multilinguality

Current AI models perform impressively on high-resource languages like English and Chinese, but they perform poorly on thousands of low-resource languages due to a lack of training data. To keep these low-resource languages alive, it is essential that AI understands and speaks them. We were the first to train language models with broad coverage of several hundred low-resource languages. We also created GlotLID, one of the leading packages for language identification (a crucial part of multilingual infrastructure), and we are working on elucidating the mechanisms and representations in LLMs that link words and meanings across languages.

Agents

Many researchers see the ultimate goal of AI research as developing autonomous agents that work independently on tasks for long periods of time and then deliver a finished product to their human (or agentic) manager. In contrast, we believe that human-AI interaction should be collaborative, at least for a large subset of sensitive domains such as health, jurisprudence and cybersecurity. One reason is that alignment to human values is imperfect, especially over long periods of sequential decision making. Another reason is that in many cases only the human fully understands the real-world context, so the human must guide the agent as it solves tasks. We have developed the HAI-CO2 framework to realize this vision of human-AI collaboration and are currently developing it for cybersecurity applications.